This is the first of a three-part article series divided into aviate, navigate and communicate sections.

I was introduced to my first flight director in 1979 and thought it was a cosmic computer that could think faster than me and was a sure sign of things to come. I later learned it didn’t think at all, but it did herald changes in the near future. That early flight director was in the Northrop T-38 Talon, and it wasn’t really a computer as we think of them today since it simply took analog signals and turned them into the movement of mechanical needles. It did some cosmic things, but it wasn’t a computer.

A modern computer deals with data in the digital world. I never really had a computer in the cockpit until I was introduced to my first inertial navigation system (INS). That’s when I learned to appreciate the advantages of the digital age to come, as well as some of the pitfalls. We learned early about the “garbage in, garbage out” phenomenon of computer data.

In 1989, I was flying an Air Force Boeing 747 between Anchorage, Alaska, and Tokyo, using what is now called North Pacific (NOPAC) R220, the northernmost route. I was especially concerned with navigation on this flight since the Soviet Union didn’t want any U.S. Air Force airplanes anywhere close to its facilities in Petropavlovsk on the Kamchatka Peninsula and we would be flying within about 100 nm. This was just six years after the Soviets shot down another Boeing 747 flying in the same area, the infamous Korean Air Lines Flight 007 incident. Perhaps “concerned” is an understatement.

Three Delco Carousel INSs, commonly called the “Carousel IV,” as well as two navigators were onboard. The Carousel was standard equipment back then for many Air Force transport aircraft, as well as civilian Boeing 747s. So, what could go wrong? The Carousel IV’s biggest limitation was that it could only hold nine waypoints and each had to be entered with latitude and longitude using a numeric keypad. Our navigators would load up all nine waypoints and once one of them was two behind us, they would program the next one to take its place.

Waypoint entry seemed simple at the time. You selected “Way Pt” from a knob, moved a thumbwheel to the desired waypoint number, hit the number key for the cardinal direction (2=N, 6=E, 8=S or 4=W) and then typed in the coordinates. Our lead navigator carefully entered the first nine waypoints before we departed, and I dutifully checked each. Another crew took our place after departure and, after passing waypoint 4, the new navigator entered new waypoints 1, 2 and 3. Passing waypoint 8, we did another crew swap and, as I was getting settled in my seat, the aircraft turned sharply to the right, directly toward the Kamchatka Peninsula. I clicked off the autopilot and returned to course as the navigator asked, “Pilot, why did you turn right?” After a series of recriminations followed by another series of “It wasn’t me” claims, we figured it out. The new waypoint 1 was entered as N51°30.5 W163°38.7, which resolves to a point along the Aleutian Islands, behind us and to the right. The second navigator confessed that he had spent most of his career punching in “W” for each longitude and while his eyes read “E163°38.7” his fingers typed “W163°38.7” instead.
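A hemisphere slip like that one is the kind of error a simple reasonableness check can catch. What follows is only a minimal sketch in Python, not anything the Carousel IV did: it measures the great-circle distance from the previous waypoint to the newly entered one and flags any leg longer than an assumed limit. The previous waypoint shown and the 600-nm limit are made up for illustration, as are the function names; only the two versions of waypoint 1 come from the story above.

```python
import math

EARTH_RADIUS_NM = 3440.065  # mean Earth radius, nautical miles


def parse_coord(text):
    """Turn 'N51°30.5' or 'W163°38.7' into signed decimal degrees."""
    hemisphere = text[0].upper()
    if hemisphere not in ("N", "S", "E", "W"):
        raise ValueError(f"unknown hemisphere in {text!r}")
    degrees, minutes = text[1:].split("°")
    value = int(degrees) + float(minutes) / 60.0
    # South and west are negative by convention.
    return -value if hemisphere in ("S", "W") else value


def leg_distance_nm(start, end):
    """Great-circle (haversine) distance between two (lat, lon) pairs."""
    lat1, lon1 = (math.radians(parse_coord(c)) for c in start)
    lat2, lon2 = (math.radians(parse_coord(c)) for c in end)
    a = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * EARTH_RADIUS_NM * math.asin(math.sqrt(a))


def leg_is_suspect(start, end, max_leg_nm=600.0):
    """Flag a leg longer than an assumed, arbitrary limit."""
    return leg_distance_nm(start, end) > max_leg_nm


# Hypothetical previous waypoint, invented for illustration only.
previous = ("N52°30.0", "E168°00.0")
intended = ("N51°30.5", "E163°38.7")  # what the flight plan said
mistyped = ("N51°30.5", "W163°38.7")  # what was actually keyed in

for label, waypoint in (("intended", intended), ("mistyped", mistyped)):
    flag = "CHECK ENTRY" if leg_is_suspect(previous, waypoint) else "ok"
    print(f"{label}: {leg_distance_nm(previous, waypoint):.0f} nm  {flag}")
```

With these assumed numbers the intended leg is well under the limit and the mistyped one is far over it. The same idea works on paper: compare each leg's distance and course against the flight plan before you accept the entry.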

If you are flying something more modern than a Carousel IV INS, chances are you don’t have to manually type in coordinates. Even if you do, chances are you have a better keyboard that doesn’t require using a numeric keypad for letters. So, what can go wrong in your high-tech “idiot-proof” airplane? Lots. Even in our digital world, the “garbage in, garbage out” problem can have dire implications. It remains our primary duty as pilots to aviate, navigate and communicate.

Aviate

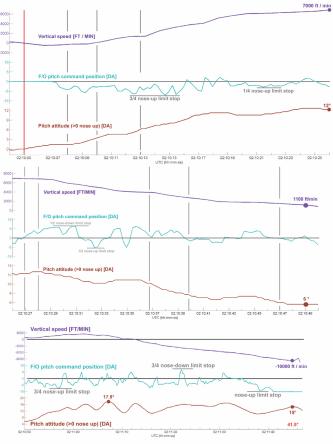

The tragic end to the June 1, 2009, flight of Air France 447 has become a standard case study for aircraft automation and crew resource management (CRM) researchers. The captain was in back during an authorized break and two first officers were left alone in the cockpit. A momentary loss of pitot/static information due to ice crystals at 35,000 ft. caused the autopilot and autothrust to disengage. The pilots did not have any airspeed information for 20 sec. before at least one instrument recovered. The pilot flying (PF) pulled back on the stick and raised the pitch from around 2 deg. to 12 deg., climbing 2,000 ft. and stalling the aircraft. The pilot not flying (PNF) took control, but the PF took control back. In this Airbus A330, control “priority” is taken by pressing a button on the sidestick. An illuminated arrow in front of the pilot turns green to indicate which stick has priority, but in the heat of battle, the PNF thought he had control when he didn’t. Four and a half minutes later, the aircraft hit the ocean, killing all 228 passengers and crew on board. The last recorded vertical velocity was -10,912 fpm.

Much has been made of the lack of sidestick feedback that allowed one pilot to pull back while the other pushed forward, making it hard to discern who had control without proper callouts or a careful examination of the flight instruments. Human factors scientists also have made note of the startle factor and other forms of panic that handicapped the two inexperienced pilots. The first officer flying had less than eight years of total experience and around 3,000 hr. of total time.

Very few of us who have graduated to high-altitude flight have spent much time hand-flying our aircraft where the high- and low-speed performance margins of our aircraft narrow. After accidents like this one there are often cries for more hand-flying, ignoring the regulatory requirements for using autopilots in Reduced Vertical Separation Minima (RVSM) airspace or the hazards of having unbelted crew and passengers while an inexperienced pilot is maintaining the pitch manually. I’m not sure more hand-flying would have cured the problem here. A quick look at the pilot’s control inputs reveals a fundamental lack of situational awareness.

Pilots with considerably more experience flying large aircraft at high altitude will recognize the problem immediately. Before losing airspeed information, the autopilot was flying the aircraft with the pitch right at about 2.5-deg. nose-up. This is fairly standard for a large aircraft at high altitudes, but it isn’t universal. The first officer flying this Airbus pitched up to 12-deg. nose-up until told by the other first officer repeatedly to “Go back down.” He then raised the nose to as high as 17-deg. nose-up and never lowered it to below 6-deg. nose-up. Even his minimum pitch level may have been too high to sustain level flight at their altitude.

We can allow the automation and human factors experts to investigate fixes to the computers and ways to improve the CRM. But there is a more basic fix to these kinds of flight data problems. We as pilots need to understand how to fly our aircraft as if the automation isn’t there. That means knowing what control inputs will create the desired performance.

Here is a quiz you should be able to pass with flying colors:

(1) What pitch and thrust setting is needed to sustain level flight at your normal cruise altitudes and speeds?

(2) What pitch is necessary to keep the aircraft climbing right after takeoff with all engines operating at takeoff thrust?

(3) What pitch is necessary to keep the aircraft climbing right after takeoff with an engine failed?

The answers were drilled into us heavy aircraft pilots many years ago, before the electrons took over our instruments. A blocked pitot tube can make an airspeed indicator behave nonsensically, so pilots were schooled to memorize known power and pitch settings. Most large aircraft will lose speed if the pitch is raised above 5-deg. nose-up at high altitudes. After takeoff with all engines operating, the answer is likely to be 10-deg. nose-up or a bit more for some aircraft. With an engine failed, you might lose a few degrees. Of course, these numbers change with aircraft weight and environmental conditions, but you should have a number in mind.
